Since the output is a tensor with more than one dimension, we need to tell PyTorch that we want the softmax computed over the right-most dimension. The `torch.max()` function finds the maximum element of a tensor.

PyTorch's cross-entropy loss applies the softmax internally, in exactly the same way as TensorFlow's `softmax_cross_entropy_with_logits`. This mechanism is conceptually confusing, because unless you know the behind-the-scenes details, you would likely apply a `softmax()` activation to your neural network's output nodes and then feed that result to the loss function, which applies softmax a second time.
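
As a minimal sketch of computing softmax over the right-most dimension, the snippet below passes `dim=-1` to `torch.nn.functional.softmax` and then uses `torch.max` to pick the most likely class per row. The `logits` values and the variable names are made up for illustration.

```python
import torch
import torch.nn.functional as F

# Hypothetical batch of raw scores (logits): 2 samples, 3 classes.
logits = torch.tensor([[1.0, 2.0, 3.0],
                       [1.0, 1.0, 1.0]])

# dim=-1 normalizes over the right-most dimension, i.e. across the classes
# of each sample rather than across the batch.
probs = F.softmax(logits, dim=-1)

# Each row is now a probability distribution, so every row sums to 1.
row_sums = probs.sum(dim=-1)

# With a dim argument, torch.max returns a (values, indices) pair.
top_prob, top_class = torch.max(probs, dim=-1)

print(probs)
print(top_class)
```

Passing `dim=0` instead would normalize each column across the batch, which is rarely what a classifier wants.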

The softmax function, also called the softargmax or normalized exponential function, takes a vector of logits as input and returns a probability distribution. It is the function used to model multiclass classification problems. The most common way to ensure that a set of weights is a valid probability distribution (all values are non-negative and they sum to 1) is to use the softmax function, defined for each sequence element as:

$$\mathrm{softmax}(x_i) = \frac{\exp(x_i)}{\sum_j \exp(x_j)}$$

The denominator, or normalization constant, is also sometimes called the partition function, and its logarithm is called the log-partition function. Like the sigmoid function, every value of the softmax function is between 0 and 1, and a small change to any of the input scores changes all of the output values.

The LogSoftmax formulation can be simplified as:

$$\mathrm{LogSoftmax}(x_i) = \log\left(\frac{\exp(x_i)}{\sum_j \exp(x_j)}\right) = x_i - \log \sum_j \exp(x_j)$$

In `torch.nn.functional` the function is declared as `softmax(input, dim=None, _stacklevel=3)`; the module form takes only the dimension, as in `nn_log_softmax(dim)`. Here's an example input: after `import torch`, create `x = torch.randn(1, 5, requires_grad=True)` and `print(x)`. In a model, define layers such as Softmax as class members and use them in the `forward` method.

In temperature-based variants, `tau` is a non-negative scalar temperature. A related use case is learning discrete policies where certain actions are known a priori to be invalid.

The cross-entropy loss function invisibly applies `log_softmax()` and then calls the `NLLLoss(x, y)` function behind the scenes.

You will implement max, softmax, and log softmax on tensors, as well as the dropout and max-pooling operations. Read the corresponding source file and ensure that you understand its gradient computation.
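
As a rough illustration of the softmax and log-softmax computations discussed here, the following plain-Python sketch uses only the standard library. The helper names `log_softmax` and `softmax` are hypothetical, and subtracting the maximum score first (the log-sum-exp trick) is an assumption added for numerical stability rather than something the text prescribes.

```python
import math

def log_softmax(scores):
    """Log-softmax of a list of floats: x_i minus the log-partition function."""
    m = max(scores)
    # Shifting by the maximum keeps every exponent <= 0, so exp() cannot overflow.
    log_partition = m + math.log(sum(math.exp(s - m) for s in scores))
    return [s - log_partition for s in scores]

def softmax(scores):
    """Softmax recovered by exponentiating the log-softmax values."""
    return [math.exp(v) for v in log_softmax(scores)]

# Large scores: calling math.exp(1000.0) directly would raise OverflowError.
scores = [1000.0, 1001.0, 1002.0]
log_probs = log_softmax(scores)
probs = softmax(scores)

print(probs)
print(sum(probs))
```

Because softmax depends only on the differences between scores, shifting every input by the same constant leaves the result unchanged, which is why the subtraction is safe.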

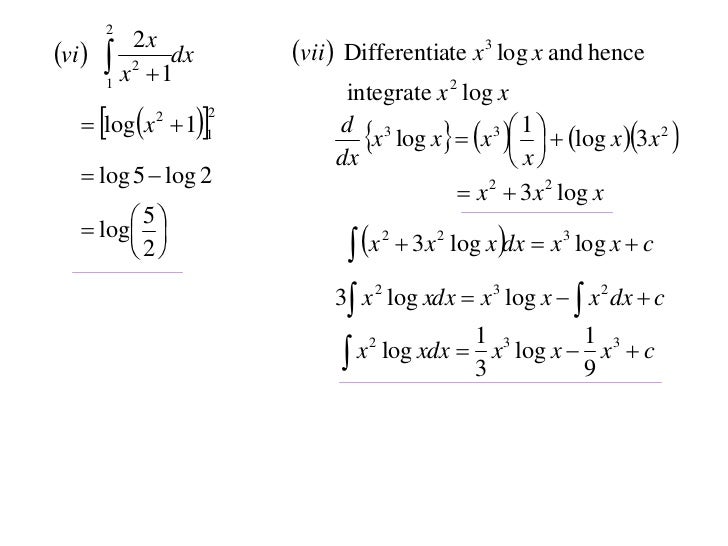

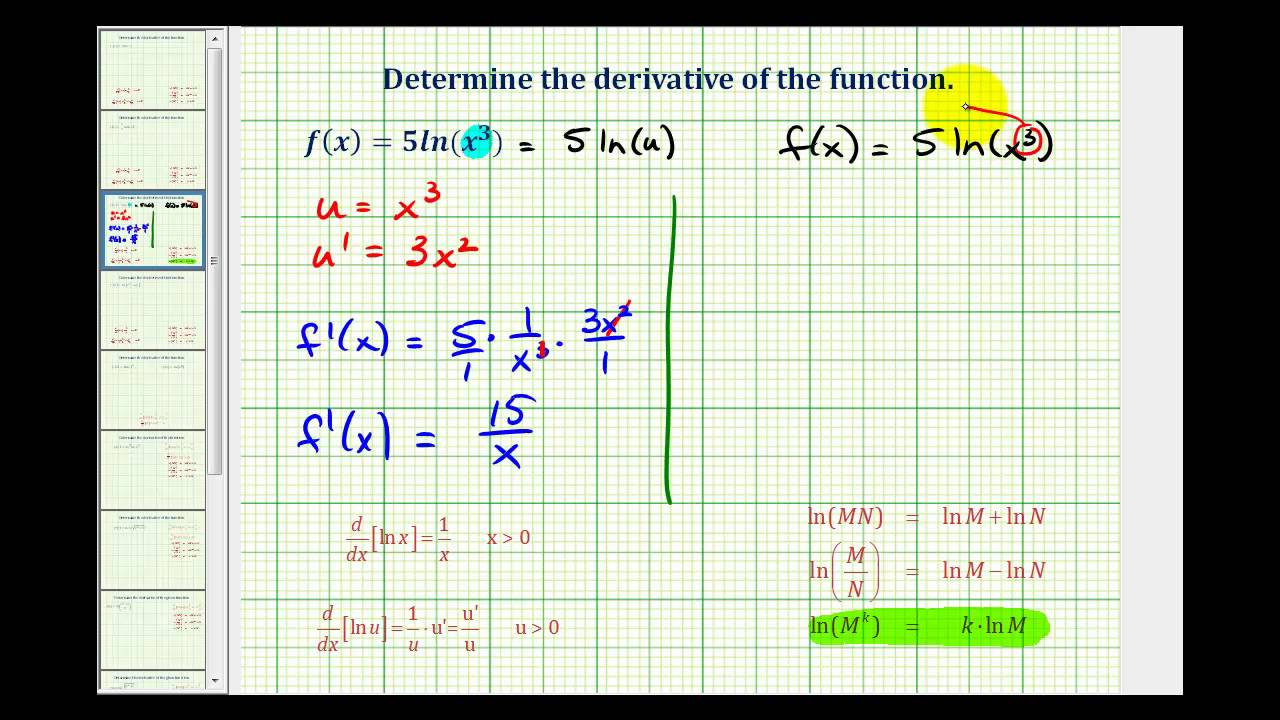


This article records how softmax turns a CNN's output into probabilities, and how to fix the error "RuntimeError: Function AddBackward0 returned an invalid gradient at index 1 - expected type torch...". Learn the math behind these functions, and when and how to use them in PyTorch.

This notebook breaks down how the `cross_entropy` function is implemented in PyTorch, and how it relates to softmax, log_softmax, and NLL (negative log-likelihood).
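
The relationship between `cross_entropy`, `log_softmax`, and NLL can be checked numerically. The sketch below is illustrative only: the `logits` and `targets` values are invented, and it compares three ways of computing the same loss, plus the common mistake of applying softmax before the loss.

```python
import torch
import torch.nn.functional as F

# Hypothetical raw network outputs: 2 samples, 3 classes.
logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0]])
targets = torch.tensor([0, 2])  # hypothetical true class indices

# 1) The one-call version, fed raw logits.
loss_direct = F.cross_entropy(logits, targets)

# 2) The same thing in two explicit steps: log_softmax, then NLL.
log_probs = F.log_softmax(logits, dim=1)
loss_two_step = F.nll_loss(log_probs, targets)

# 3) NLL by hand: take the log-probability of the true class, negate, average.
loss_manual = -log_probs[torch.arange(len(targets)), targets].mean()

# Pitfall: applying softmax first means softmax is effectively applied twice.
loss_double_softmax = F.cross_entropy(F.softmax(logits, dim=1), targets)

print(loss_direct, loss_two_step, loss_manual, loss_double_softmax)
```

The first three values agree, while the last one differs, which is why raw logits rather than softmax outputs should be passed to `cross_entropy`.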